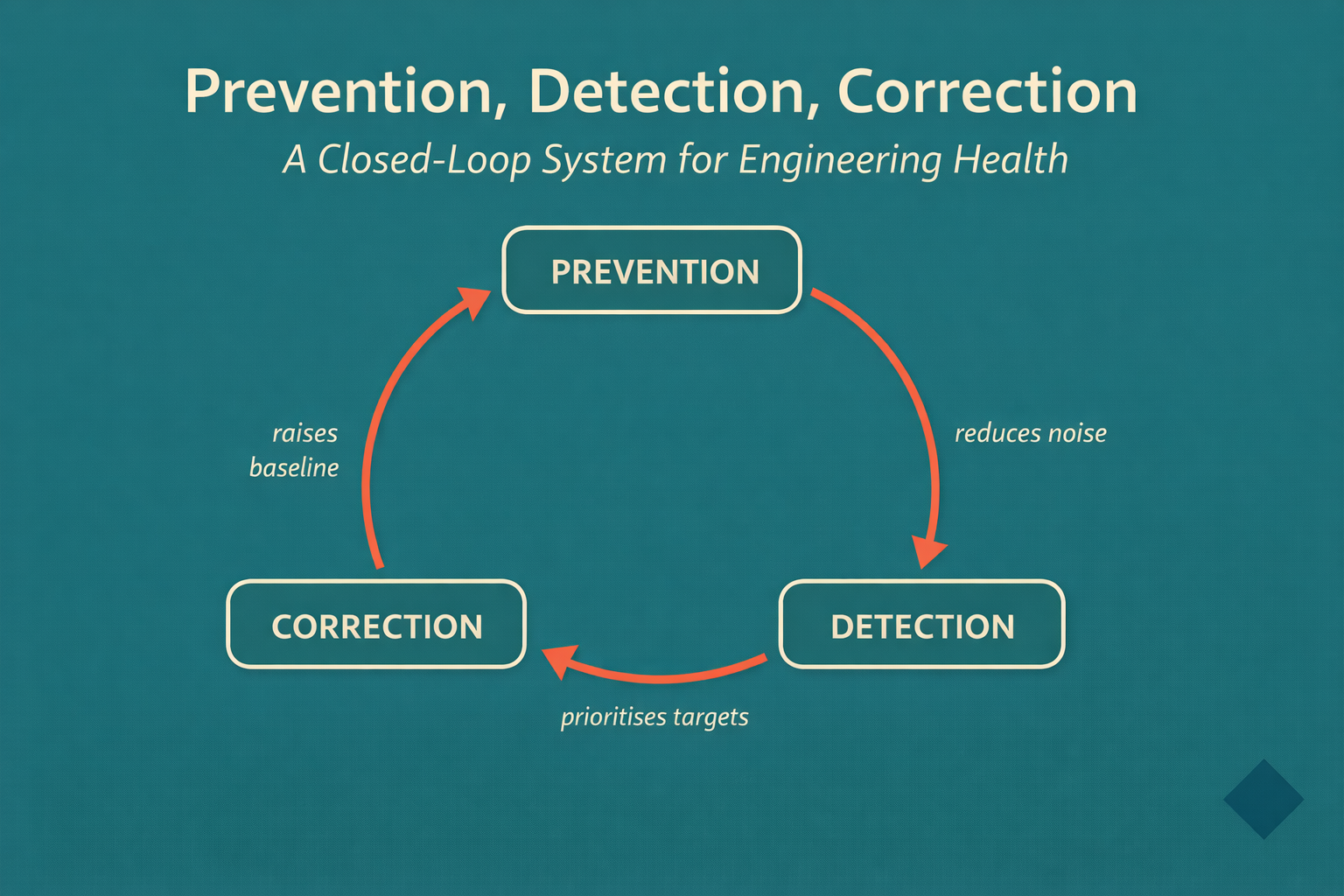

Prevention, Detection, and Correction are three connected phases that form a closed loop for engineering health. Prevention builds quality in before defects form — through TDD, Clean Architecture, and enforced workflows. Detection measures process and code health to surface root causes, not just symptoms. Correction translates that diagnosis into prioritised action. Each phase reduces the work the next must do, creating a self-reinforcing system where the floor rises with every iteration.

Most engineering teams run a reactive cycle: build fast, catch problems when they're painful, fix what's on fire. That cycle keeps you busy. It doesn't make you better.

The industry has been patching symptoms for years. DORA metrics to track delivery performance. Static analysis to flag code smells. Code reviews to catch architecture violations. Each tool delivers signal. None of them close the loop.

Prevention, Detection, and Correction are three connected phases, not three separate tools, that form a closed-loop system for engineering health. The phases only work together. Prevention without Detection is flying blind. Detection without Correction is a dashboard you stop looking at. Correction without Prevention means cleaning up the same baseline again and again.

Here's what the loop looks like, and why each phase makes the others stronger.

The Reactive Default — and Why AI Makes It Worse

Before describing the loop, let's be honest about what most teams are actually doing.

The default pattern: engineers build features, tests get written (sometimes), code gets reviewed (eventually), and problems surface in production. The team responds to incidents, allocates sprint capacity to "fix debt," and the cycle continues. DORA 2024 reports an industry average change failure rate of 30–45% and mean time to restore above 22 hours. These aren't outliers. They're the norm.

This was survivable at human coding speed. Violations happened slowly. Code review could catch most problems before they compounded. A senior engineer could hold the architecture together through diligence.

That calculus breaks with AI. GitHub's 2025 data shows 46% of code in Copilot-enabled repositories is now AI-generated. AI doesn't write slower when the architecture is messy — it writes faster, and it replicates whatever patterns it finds. One boundary violation becomes dozens within a sprint. One anti-pattern becomes the template.

DORA 2024 found this empirically: AI adoption had a negative relationship with delivery stability for teams that hadn't addressed their foundational practices. Speed went up. Quality went down. The reactive cycle accelerated.

The problem isn't the AI. The problem is running a reactive quality model at AI speed.

Phase 1: Prevention — Quality Built In From the First Keystroke

Prevention is the upstream phase. Its job is to reduce the population of defects, debt, and violations before they form — not to catch them afterward.

Most quality investments are downstream: code review after implementation, QA after development, monitoring after deployment. Each catches problems that shouldn't have existed. Prevention moves the intervention upstream, to the point where code is being written.

Three practices form the core of Prevention:

Test-Driven Development as design pressure. TDD's Red-Green-Refactor cycle does something that post-hoc testing cannot: it applies design pressure during implementation. When you write the test first, a class that's hard to test signals coupling, unclear responsibilities, or missing boundaries. That signal arrives before the code exists, when fixing it costs nothing. As we covered in our TDD post, the failing test is a specification, not a verification step. The design pressure is the value.

Clean Architecture boundaries as constraint mechanisms. Clear architecture boundaries — dependencies pointing inward, domain isolated from infrastructure, modules exposing explicit public APIs — are the structural constraints that make AI generation reliable. An AI agent working in a codebase with enforced boundaries has a constrained solution space. It puts new code in the right layer, depends on the right interfaces, and stays isolated from infrastructure — not because it "understands" architecture, but because the boundaries make violations impossible to ship. As we explored in Clean Architecture in the Age of AI, pipeline enforcement is now a prerequisite for safe AI-assisted development.
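To make "boundaries that make violations impossible to ship" concrete, here is a minimal sketch of an import check that a pipeline could run against domain-layer files. It is an illustration under stated assumptions, not the tooling this post refers to: the forbidden package list and the function name `find_boundary_violations` are hypothetical, and a real rule set would come from your own layering.

```python
import ast

# Hypothetical layering rule: the domain layer may not import these packages.
FORBIDDEN_IN_DOMAIN = {"sqlalchemy", "psycopg2", "requests"}


def find_boundary_violations(source: str, forbidden: set[str]) -> list[str]:
    """Return the forbidden top-level packages that `source` imports."""
    violations = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Only absolute imports are checked; relative imports are skipped.
            names = [node.module]
        else:
            continue
        for name in names:
            root = name.split(".")[0]
            if root in forbidden:
                violations.add(root)
    return sorted(violations)


# Two tiny example modules, inlined as strings so the sketch is self-contained.
leaky_domain_module = "import sqlalchemy.orm\nfrom dataclasses import dataclass\n"
clean_domain_module = "from dataclasses import dataclass\n"

print(find_boundary_violations(leaky_domain_module, FORBIDDEN_IN_DOMAIN))
print(find_boundary_violations(clean_domain_module, FORBIDDEN_IN_DOMAIN))
```

Wired into CI so that a non-empty result fails the build, a check like this constrains human and AI contributors alike.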

Workflow gates that enforce sequence. Built-in quality requires that checks happen before each stage, not after it. The Prevention platform enforces this structurally: no implementation begins without an approved plan. No code reaches integration without passing unit and component tests. No deployment proceeds without contract verification and system tests passing. Each gate is a structural constraint, not a guideline or a suggestion.
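The gate sequence can be sketched as an ordered list of stages, each with the checks it requires. This is only a sketch of the idea: the stage and check names below are hypothetical labels paraphrasing the paragraph above, not identifiers from any real platform.

```python
from typing import Optional

# Hypothetical gate definitions: (stage, checks that must pass before it starts).
GATES = [
    ("implementation", ["plan_approved"]),
    ("integration", ["unit_tests_passed", "component_tests_passed"]),
    ("deployment", ["contract_verified", "system_tests_passed"]),
]


def furthest_stage(passed_checks: set[str]) -> Optional[str]:
    """Return the last stage whose gate, and every earlier gate, is satisfied."""
    reached = None
    for stage, required in GATES:
        if not all(check in passed_checks for check in required):
            break  # a failed gate blocks every later stage
        reached = stage
    return reached


print(furthest_stage({"plan_approved"}))
print(furthest_stage({"unit_tests_passed", "component_tests_passed"}))
```

The second call returns `None`: passing tests do not unlock integration when the earlier plan-approval gate was skipped, which is what "enforcing sequence" means here.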

The result of these three practices working together: change failure rates in the 0–15% range instead of the industry average of 30–45%. Not through heroics — through structure.

Figure 1: The closed loop. Each phase reduces the work the next phase must do.

Phase 2: Detection — Diagnosis, Not Just Metrics

Prevention reduces the rate at which problems form. It doesn't eliminate them. Code still degrades, dependencies still creep, test suites still lose effectiveness over time. Detection's job is to surface root causes — not just symptoms.

Most teams have metrics. DORA metrics tell you delivery is slow. Code coverage tells you tests exist. Error rates tell you production is unhealthy. What most teams don't have is a diagnostic layer that connects process symptoms to code-level causes.

Consider a team with a 17-day lead time. DORA tells you you're slow. But why? Is it slow code review because changes are too large? Is it large changes because the architecture forces a wide blast radius? Is it a wide blast radius because there are no boundaries separating modules? Each root cause requires a different fix — and DORA alone can't tell you which one it is.

Detection measures across four dimensions of code health:

Architecture: Boundary violations, dependency direction, layering. A codebase where the domain layer imports database clients has an architecture health problem that compounds every sprint — and becomes catastrophic when AI agents start generating against it.

Complexity: Cyclomatic complexity, cognitive load, nesting depth. High complexity correlates directly with change failure rate. Complex files require more cognitive effort to change correctly, generate more defects per change, and resist refactoring.

Maintainability: Coupling, cohesion, duplication, naming quality. Tightly coupled code forces large, risky changes. Cohesive, well-named code supports small, targeted ones.

Test effectiveness: Mutation score, not just coverage. A test suite at 90% line coverage can miss 60% of the logic faults in the codebase. Mutation testing surfaces which tests are actually verifying behaviour versus which tests are passing alongside the code without testing it.
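The gap between coverage and mutation score is easy to show in miniature. The sketch below hand-writes a single mutant rather than using a mutation testing tool, and every name in it is hypothetical. The weak suite executes every line of the function, yet it cannot tell the original from the mutant.

```python
def is_adult(age: int) -> bool:
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    # Hand-written mutant: ">=" replaced by ">".
    return age > 18


def weak_suite(fn) -> bool:
    """Runs every line of fn but never probes the boundary value."""
    return fn(30) is True and fn(5) is False


def strong_suite(fn) -> bool:
    """Adds the boundary case that distinguishes ">=" from ">"."""
    return weak_suite(fn) and fn(18) is True


def mutation_score(suite, mutants) -> float:
    """Fraction of mutants the suite kills (a killed mutant fails the suite)."""
    killed = sum(1 for mutant in mutants if not suite(mutant))
    return killed / len(mutants)


print(mutation_score(weak_suite, [is_adult_mutant]))
print(mutation_score(strong_suite, [is_adult_mutant]))
```

Both suites pass against the original function and both give full line coverage. Only the strong suite kills the mutant.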

These four dimensions combine into a single Health Score (0–10), with 7.0+ indicating Elite DORA performance potential. The score also drives an AI Readiness assessment: a codebase below 5.0 is high-risk for AI agent deployment. Agents will generate code faster into broken architecture, creating defects faster than any review process can contain.
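The post does not spell out how the four dimensions are weighted, so the following is purely an assumption: a sketch that averages four 0–10 dimension scores with equal weight and applies the two thresholds mentioned above. The function names and the "intermediate" label for the middle band are hypothetical.

```python
def health_score(architecture: float, complexity: float,
                 maintainability: float, test_effectiveness: float) -> float:
    """Equal-weight average of four 0-10 scores (the weighting is an assumption)."""
    scores = [architecture, complexity, maintainability, test_effectiveness]
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("each dimension score must be between 0 and 10")
    return round(sum(scores) / len(scores), 1)


def ai_readiness(score: float) -> str:
    """Apply the thresholds from the text: below 5.0 is high-risk, 7.0+ is elite potential."""
    if score < 5.0:
        return "high-risk"
    if score >= 7.0:
        return "elite-potential"
    return "intermediate"  # hypothetical label for the band in between


score = health_score(architecture=8, complexity=6, maintainability=7, test_effectiveness=5)
print(score, ai_readiness(score))
```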

Detection also tracks debt density — technical debt measured in points per 1,000 lines of code (pts/kLOC). This makes debt comparable across codebases and trackable over time. A codebase degrading from 31.2 pts/kLOC to 78.9 pts/kLOC over six months tells a story. The direction of travel matters as much as the current score.
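The arithmetic behind debt density is a simple normalisation. In the sketch below, the point totals and the 50,000-line codebase size are made-up inputs chosen to reproduce the two densities quoted above. How debt points are assigned in the first place is outside its scope.

```python
import math


def debt_density(debt_points: float, lines_of_code: int) -> float:
    """Technical debt in points per 1,000 lines of code (pts/kLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return debt_points / (lines_of_code / 1000)


# Hypothetical snapshots of the same 50,000-line codebase, six months apart.
before = debt_density(debt_points=1560, lines_of_code=50_000)
after = debt_density(debt_points=3945, lines_of_code=50_000)

print(f"{before:.1f} -> {after:.1f} pts/kLOC, x{after / before:.2f}")
assert math.isclose(before, 31.2) and math.isclose(after, 78.9)
```

Because the measure is normalised by size, the same comparison works between a 50,000-line service and a 500,000-line monolith.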

The key shift Detection represents: you're not monitoring outcomes, you're diagnosing causes. Outcomes only tell you when something went wrong. Causes tell you where to intervene before it does.

Figure 2: Four dimensions of code health combined into a Health Score that predicts DORA performance and AI readiness.

Phase 3: Correction — Acting on What You Know

Detection surfaces root causes. Correction is the system by which those root causes get fixed.

This sounds obvious. In practice, most teams have Detection and not Correction. They have dashboards showing code health scores, debt accumulation, and DORA trends — and separate sprint processes where "tech debt work" competes with feature work and consistently loses. The gap between "we know this is a problem" and "we're systematically addressing it" is where most engineering health initiatives die.

Detection without Correction is shelfware.

Effective Correction requires three things working together:

Prioritisation by impact, not by pain. The instinct is to fix what hurts most right now — the module causing the most incidents, the class everyone avoids. The right approach is to fix what has the highest impact on the metrics that matter. Debt density by module, cross-referenced with frequency of change and complexity, identifies the highest-leverage refactoring targets. A module with high debt density that's changed every sprint delivers far more value to fix than one with the same density that hasn't been touched in a year.
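One way to turn "debt density cross-referenced with frequency of change and complexity" into a ranking is a simple product of the three. That formula is an assumption made for illustration, as are the module names and numbers. The point is only that a frequently changed module outranks an untouched one with identical debt density.

```python
from dataclasses import dataclass


@dataclass
class ModuleStats:
    name: str
    debt_density: float        # pts/kLOC
    changes_last_quarter: int  # commits touching the module
    avg_complexity: float      # e.g. mean cyclomatic complexity


def leverage(m: ModuleStats) -> float:
    """Hypothetical leverage score: density x change frequency x complexity."""
    return m.debt_density * m.changes_last_quarter * m.avg_complexity


def rank_targets(modules: list[ModuleStats]) -> list[str]:
    """Module names ordered from highest to lowest refactoring leverage."""
    return [m.name for m in sorted(modules, key=leverage, reverse=True)]


modules = [
    ModuleStats("legacy_export", debt_density=60.0, changes_last_quarter=0, avg_complexity=9.0),
    ModuleStats("billing", debt_density=60.0, changes_last_quarter=12, avg_complexity=9.0),
    ModuleStats("auth", debt_density=20.0, changes_last_quarter=10, avg_complexity=5.0),
]
print(rank_targets(modules))
```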

Safe refactoring workflows. The reason teams accumulate debt instead of addressing it is risk. Touching complex, poorly tested code to refactor it can break behaviour nobody fully understands. Characterisation tests solve this: before refactoring any legacy code, you write tests that capture current behaviour (actual current behaviour, not intended behaviour). These tests form a safety net that catches regressions during the refactor. The change failure rate for refactoring with characterisation tests approaches zero. Without them, it's high enough that most teams won't try.
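A characterisation test can be as simple as recording what the legacy code returns today and asserting that the refactored version returns the same thing. The shipping function below is a hypothetical stand-in for legacy code, including an undocumented rule that the test preserves rather than questions.

```python
def legacy_shipping_cost(weight_kg, express):
    # Hypothetical legacy code with an undocumented rule nobody remembers.
    cost = 5 + 2 * weight_kg
    if express:
        cost = cost * 1.5
    if weight_kg > 20:
        cost -= 3
    return cost


def record_behaviour(fn, cases):
    """Capture actual outputs for each input; these become the expected values."""
    return {args: fn(*args) for args in cases}


# Step 1: pin down current behaviour before touching the code.
CASES = [(1, False), (1, True), (25, False), (25, True)]
GOLDEN = record_behaviour(legacy_shipping_cost, CASES)


# Step 2: refactor, keeping the odd discount because the tests say it exists.
def refactored_shipping_cost(weight_kg, express):
    base = 5 + 2 * weight_kg
    multiplier = 1.5 if express else 1.0
    discount = 3 if weight_kg > 20 else 0
    return base * multiplier - discount


# Step 3: the characterisation check fails if the refactor changed any output.
assert record_behaviour(refactored_shipping_cost, CASES) == GOLDEN
```

In practice the recorded values would be stored alongside the tests, and the cases would be chosen to cover each branch of the legacy code.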

Progress measurement in health units. "We allocated 40 story points to tech debt this sprint" tells you how much effort went in. It doesn't tell you whether the codebase improved. Measuring debt density before and after correction cycles, tracking Health Score movements, and correlating code health changes with DORA metric improvements connects the work to the outcome. When you can show that addressing two architecture violations reduced lead time by two days, the next round of correction work doesn't need to fight for budget.

The Correction phase also closes the feedback loop back to Prevention. When correction surfaces recurring patterns — the same architecture violation appearing across three different modules, test suites that consistently lose effectiveness under pressure — those patterns become inputs for strengthening Prevention's workflow gates. The platform learns where discipline needs to be tightened before the next defect forms.

Why It's a Loop, Not a Pipeline

The three phases are described sequentially, but the value isn't in the sequence — it's in the loop.

Prevention reduces the volume of problems that Detection must catch. A team building with TDD and enforced boundaries generates far fewer architecture violations, complexity spikes, and test effectiveness gaps than a team building without them. Detection has less noise to filter, and the signal it surfaces is higher value.

Detection makes Correction more effective. Without a diagnostic layer, correction is driven by instinct — fix what's loudest. With Health Score data, debt density maps, and root cause correlation, correction is driven by evidence. The highest-leverage fixes get done first.

Correction improves the baseline that Prevention protects. When correction addresses a systemic pattern — a consistent tendency for use cases to reach into infrastructure directly, for instance — that insight strengthens the Prevention gate that catches it. The architecture fitness function gets tighter. The constraint space for AI generation becomes more precise. Future Prevention outputs start from a cleaner baseline.

This compounding effect is especially significant with AI. AI agents amplify whatever patterns exist. In a closed-loop system with a rising health baseline, AI amplifies quality — generating clean code into clean architecture. In a reactive system with a degrading baseline, AI amplifies chaos. The difference between teams that get extraordinary value from AI and teams that get accelerated debt is whether they have the loop running.

How to Start the Loop

You don't need the full platform to start. The loop has a natural entry point.

Start with Detection. Before you can improve, you need to know where you are. Connect your repository and run a health scan. Get your Health Score, debt density, and DORA baseline. The scan takes hours, not weeks. It reveals the highest-priority correction targets and shows whether your codebase is ready for AI agent deployment.

Run one Correction sprint. Take the top two or three issues from Detection — the modules with the highest debt density that are changed most frequently. Write characterisation tests before touching them. Refactor to address the specific issue Detection flagged. Measure Health Score before and after.

Add one Prevention gate. Based on the patterns Correction surfaces, add one automated check that runs on every commit: an import restriction, a complexity threshold, or a mandatory test pyramid check. Make violations fail the build. This converts a recurring problem from something you fix repeatedly into something the pipeline prevents.
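As one example of such a gate, here is a sketch of a complexity threshold check. It approximates cyclomatic complexity by counting branching nodes in the syntax tree, which is cruder than a dedicated analyser. The threshold, function names, and sample source are all hypothetical.

```python
import ast

# Node types counted as branches; this is a rough approximation only.
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def approx_complexity(func: ast.FunctionDef) -> int:
    """1 plus the number of branching nodes inside the function."""
    return 1 + sum(isinstance(node, BRANCH_NODES) for node in ast.walk(func))


def functions_over_threshold(source: str, max_complexity: int) -> list[str]:
    """Names of functions whose approximate complexity exceeds the threshold."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, ast.FunctionDef) and approx_complexity(node) > max_complexity
    ]


sample = '''
def simple(x):
    return x + 1

def tangled(x):
    if x > 0:
        for i in range(x):
            if i % 2:
                while x:
                    x -= 1
    return x
'''

offenders = functions_over_threshold(sample, max_complexity=3)
print(offenders)
# In a CI script, a non-empty list would exit non-zero to fail the build.
```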

Measure the loop. Track Health Score, debt density, and lead time across the iteration. Show the correlation between code health improvement and delivery performance. This is the feedback signal that calibrates the next iteration and builds the case for the next investment.
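Showing the correlation can start as a small calculation over per-iteration measurements. The figures below are invented for illustration, and four data points are far too few to prove anything. The sketch only shows the shape of the analysis, using a plain Pearson coefficient.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical measurements from four consecutive iterations of the loop.
health_scores = [4.8, 5.3, 5.9, 6.4]
lead_time_days = [17.0, 15.0, 13.0, 11.0]

r = pearson(health_scores, lead_time_days)
print(f"correlation between Health Score and lead time: {r:.2f}")
```

A strongly negative coefficient means lead time fell as Health Score rose. It is evidence to present with the trend data, not proof of causation.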

The loop doesn't require a big-bang transformation. It requires connecting one phase to the next, one sprint at a time.

The Bottom Line

The reactive cycle — build fast, catch problems late, fix what's on fire — persists because it requires no coordination between phases. Each phase is local: developers write code, monitors catch failures, engineers fix bugs. Nothing connects.

The closed loop requires connecting the phases deliberately: Prevention's outputs become Detection's baseline; Detection's findings become Correction's inputs; Correction's patterns become Prevention's constraints. That connection is what transforms a collection of engineering tools into a system that gets better over time.

Prevention is cheaper than detection. Building it right is faster than fixing it later. And with AI generating nearly half of the code in Copilot-enabled repositories, whether a team runs the loop determines whether AI compounds its quality or its chaos.