TDD is a design tool, not a testing strategy. The Red-Green-Refactor cycle shapes architecture before implementation — each failing test forces decisions about API surface, dependency boundaries, and responsibility separation. Teams that treat TDD as verification miss its real value: design feedback that produces naturally decoupled, testable code.

We spent two years building an AI coding agent that ships production code. Along the way, we learned something that changed how we think about testing entirely: the teams that get the most value from TDD aren't using it to test their code. They're using it to design their code.

This distinction isn't semantic. It's the difference between test suites that catch bugs and test suites that prevent them from existing in the first place.

The Misconception That Wastes Millions of Engineering Hours

Here's how most teams practice TDD:

- Write some production code

- Realize they should have tests

- Write tests that verify the code they just wrote

- Call it "TDD" because there are tests

This isn't TDD. It's test-after development with a better label. And it produces a specific kind of test — one that verifies implementation, not behavior.
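Here is a minimal sketch of that difference. It uses a hypothetical cart-total function, and all names are illustrative rather than taken from any real codebase. The test-after check only confirms that the code ran and returned something plausible. The specification pins down the result for one concrete input.

```typescript
import assert from "node:assert/strict";

// Hypothetical types and functions, for illustration only.
type LineItem = { priceCents: number; qty: number };
type TotalFn = (lines: LineItem[]) => number;

const correctTotal: TotalFn = (lines) =>
  lines.reduce((sum, line) => sum + line.priceCents * line.qty, 0);

// A broken variant that forgets to multiply by quantity.
const brokenTotal: TotalFn = (lines) =>
  lines.reduce((sum, line) => sum + line.priceCents, 0);

const cart: LineItem[] = [
  { priceCents: 250, qty: 2 },
  { priceCents: 100, qty: 1 },
];

// Test-after style: runs the code and checks the shape of the result.
function decorativeTestPasses(total: TotalFn): boolean {
  const result = total(cart);
  return typeof result === "number" && result >= 0;
}

// Specification style: this cart must total 2 * 250 + 1 * 100 = 600 cents.
function behavioralTestPasses(total: TotalFn): boolean {
  return total(cart) === 600;
}

// The decorative test cannot tell the implementations apart; the spec can.
assert.equal(decorativeTestPasses(brokenTotal), true);
assert.equal(behavioralTestPasses(brokenTotal), false);
```

Both checks execute the same lines. Only one of them would notice if the quantity multiplication disappeared.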

The result? We analyzed it in our previous post on decorative tests: 80-90% of enterprise test suites would still pass if you deleted the production code they claim to test. These tests execute lines. They don't verify outcomes. They're expensive to maintain and nearly useless at catching defects.

Real TDD — Red-Green-Refactor practiced as Kent Beck described it — is fundamentally different. The test isn't a verification step. It's a design specification written before the code exists.

Where Design Actually Happens: The Red Phase

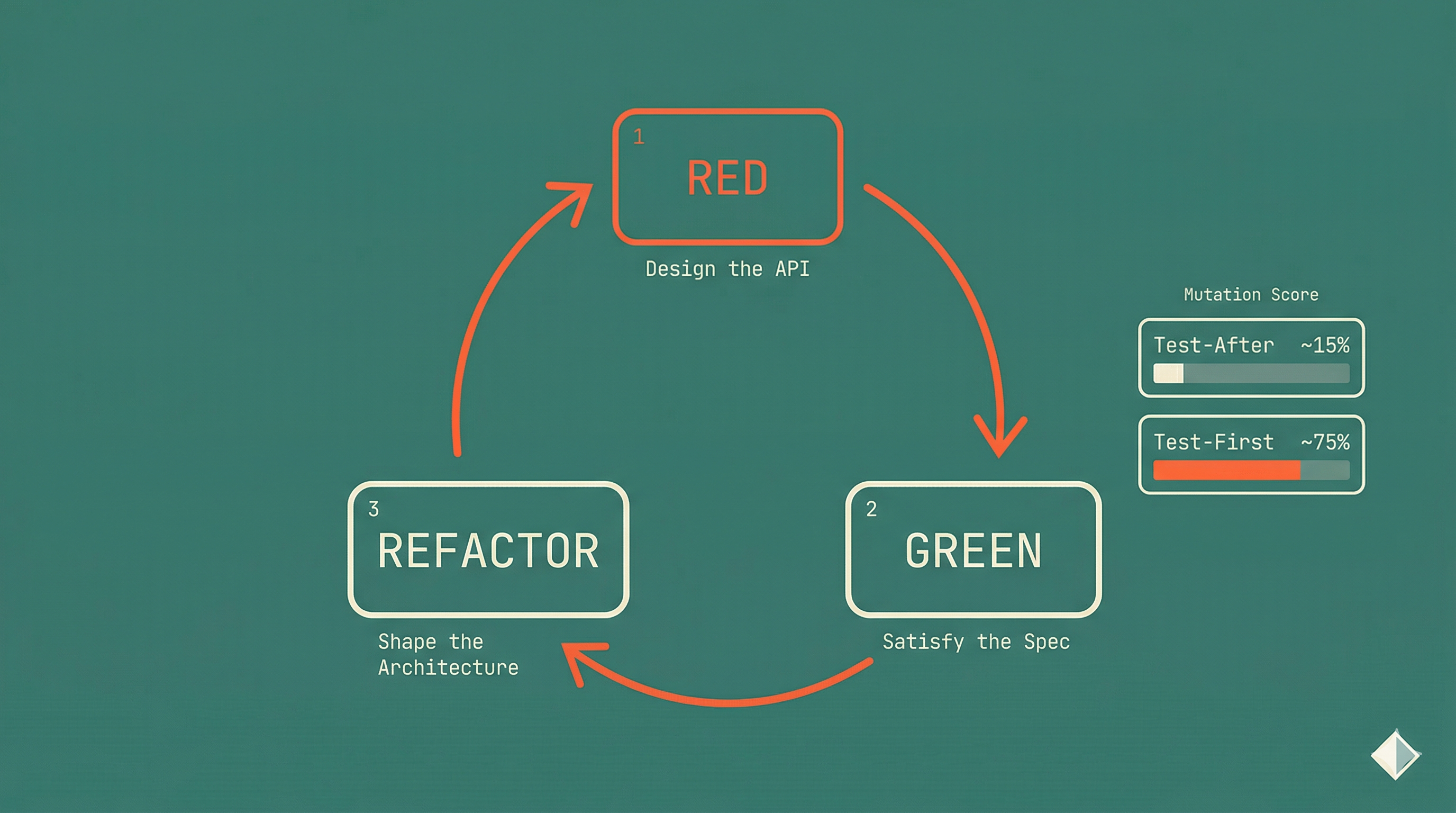

In the TDD cycle, Red-Green-Refactor isn't a testing loop. It's a design loop.

Figure 1: Red-Green-Refactor as a design loop — each phase serves a design purpose, not just a testing one.

Red: Design the interface. Before writing any implementation, you write a test that calls code that doesn't exist yet. This forces you to answer design questions first:

- What is the API surface? What does the caller need to provide?

- What does the function return? What shape does the output take?

- What are the dependencies? Where do the boundaries sit?

- What is the unit of behavior? What single thing does this do?

These are architecture decisions. You're making them with a concrete example — a test case — not in the abstract. The failing test is a specification document that happens to be executable.
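Here is a sketch of what a Red-phase test might look like for a hypothetical `applyDiscount` function. The spec comes first. The stub exists only so the file compiles. The three checks record the design decisions (integer cents in and out, rounding, rejecting bad input) before any logic is written. A small `runSpec` helper, also hypothetical, stands in for a test framework so the example runs on its own.

```typescript
import assert from "node:assert/strict";

// Red phase for a hypothetical applyDiscount. The spec below pins down:
//  - inputs: a subtotal in integer cents and a percentage from 0 to 100
//  - output: the discounted subtotal, rounded to whole cents
//  - out-of-range percentages throw a RangeError instead of being clamped
function applyDiscount(subtotalCents: number, percent: number): number {
  throw new Error("not implemented"); // stub so the spec compiles and runs
}

// Minimal stand-in for a test runner: collects failure messages.
function runSpec(fn: (subtotalCents: number, percent: number) => number): string[] {
  const failures: string[] = [];
  const check = (name: string, body: () => void): void => {
    try {
      body();
    } catch (err) {
      failures.push(`${name}: ${(err as Error).message}`);
    }
  };

  check("10% off 1000 cents is 900", () => assert.equal(fn(1000, 10), 900));
  check("rounds to whole cents", () => assert.equal(fn(999, 15), 849));
  check("rejects percent above 100", () =>
    assert.throws(() => fn(1000, 150), RangeError));

  return failures;
}
```

Calling `runSpec(applyDiscount)` returns three failures. That is the Red state: the specification is complete, and the behavior does not exist yet.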

Green: Implement the minimum. You write the simplest code that makes the test pass. Not the cleverest code. Not the most performant code. The simplest. This constraint prevents over-engineering and keeps each unit small and focused.

Refactor: Improve the structure. With a passing test as your safety net, you clean up duplication, extract abstractions, and improve naming. The test tells you immediately if you've broken behavior. You're reshaping the design with confidence.
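The sketch below shows Green and Refactor for a hypothetical `applyDiscount(subtotalCents, percent)`. It assumes a three-check spec that stays unchanged throughout. The Green version is the simplest code that passes. The refactored version extracts validation and names the intermediate value without changing the arithmetic. A third, deliberately broken refactor shows the safety net at work.

```typescript
import assert from "node:assert/strict";

// Hypothetical discount function signature, for illustration only.
type Discount = (subtotalCents: number, percent: number) => number;

// The spec, unchanged across every phase.
function specPasses(fn: Discount): boolean {
  try {
    assert.equal(fn(1000, 10), 900);
    assert.equal(fn(999, 15), 849); // rounds to whole cents
    assert.throws(() => fn(1000, 150), RangeError);
    return true;
  } catch {
    return false;
  }
}

// Green: the simplest code that makes the spec pass.
const green: Discount = (subtotalCents, percent) => {
  if (percent < 0 || percent > 100) throw new RangeError("percent out of range");
  return Math.round(subtotalCents - (subtotalCents * percent) / 100);
};

// Refactor: validation extracted and the intermediate value named.
// The arithmetic is identical, so behavior should not change.
function assertValidPercent(percent: number): void {
  if (percent < 0 || percent > 100) throw new RangeError("percent out of range");
}
const refactored: Discount = (subtotalCents, percent) => {
  assertValidPercent(percent);
  const discountCents = (subtotalCents * percent) / 100;
  return Math.round(subtotalCents - discountCents);
};

// A slip during refactoring: returns the discount, not the discounted price.
const slipped: Discount = (subtotalCents, percent) => {
  assertValidPercent(percent);
  return Math.round((subtotalCents * percent) / 100);
};

assert.equal(specPasses(green), true);
assert.equal(specPasses(refactored), true);
assert.equal(specPasses(slipped), false); // the safety net catches it
```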

The critical insight: the design decisions happen in Red, before any production code exists. By the time you write implementation, the hard thinking is done.

Design Pressure: When the Test Talks Back

Here's where TDD becomes genuinely powerful as a design tool. When a test is hard to write, that's not a testing problem. It's a design problem.

Figure 2: Test difficulty as a design pressure signal — hard-to-write tests indicate structural problems in the underlying design.

Consider this scenario. You're writing a test for a new feature, and the setup looks like this:

```typescript
test('should apply discount to order', () => {
  const db = new TestDatabase();
  const emailService = new MockEmailService();
  const inventoryClient = new MockInventoryClient();
  const auditLogger = new MockAuditLogger();
  const config = { taxRate: 0.08, region: 'US', currency: 'USD' };

  const userRepo = new UserRepository(db);
  const orderRepo = new OrderRepository(db);
  const pricingEngine = new PricingEngine(config, inventoryClient);

  const service = new OrderService(
    userRepo,
    orderRepo,
    pricingEngine,
    emailService,
    auditLogger
  );

  // ... 15 more lines of setup before the actual test
});
```

Five constructor dependencies, three more objects wired in behind them, and fifteen more lines of setup before the actual test. The test is screaming at you: this class does too much.

That's design pressure. And it's invaluable.

In test-after development, you never feel this pressure. The code already exists. You set up whatever the implementation requires, no matter how painful, because the alternative is not having a test. The design feedback disappears.

In TDD, you feel the pressure before writing the implementation. You can respond to it immediately:

- Too many dependencies? The class has too many responsibilities. Split it.

- Complex setup? The boundaries are in the wrong place. Redraw them.

- Need to access internals? The API isn't exposing the right behavior. Redesign it.

- Mocking everything? You're testing implementation, not behavior. Think about what the caller actually needs.

Every one of these signals maps to a well-known design principle. Having too many dependencies violates Single Responsibility. Needing access to internals violates encapsulation. Complex setup often means missing abstractions. Mocking everything means the test is coupled to implementation rather than behavior. TDD surfaces these violations before they become entrenched.
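Here is one way to respond to the pressure from the `OrderService` test, sketched with hypothetical types. The discount behavior moves out of `OrderService` into a unit whose only dependency is the one thing it actually needs: a coupon lookup. Email, auditing, inventory, and persistence stay with whatever orchestrates the order, so none of them appear in this test.

```typescript
import assert from "node:assert/strict";

// Hypothetical types, for illustration only.
interface OrderLine { sku: string; priceCents: number; qty: number }
interface Order { lines: OrderLine[]; couponCode?: string }

// The one thing discounting needs: what a coupon is worth.
interface CouponLookup {
  percentOff(code: string): number | undefined;
}

// Discounting extracted from OrderService. Email, auditing, inventory and
// persistence belong to the code that orchestrates an order, not here.
class OrderDiscounter {
  private readonly coupons: CouponLookup;

  constructor(coupons: CouponLookup) {
    this.coupons = coupons;
  }

  totalAfterDiscount(order: Order): number {
    const subtotal = order.lines.reduce((sum, l) => sum + l.priceCents * l.qty, 0);
    const percent =
      order.couponCode === undefined ? 0 : (this.coupons.percentOff(order.couponCode) ?? 0);
    return Math.round((subtotal * (100 - percent)) / 100);
  }
}

// Test setup shrinks to a single fake with a single method.
const coupons: CouponLookup = {
  percentOff: (code) => (code === "SAVE10" ? 10 : undefined),
};
const discounter = new OrderDiscounter(coupons);

const order: Order = {
  lines: [{ sku: "A1", priceCents: 2500, qty: 2 }],
  couponCode: "SAVE10",
};
assert.equal(discounter.totalAfterDiscount(order), 4500);
```

This is a judgment call, not the only valid split. The signal from the test is that discounting can be specified without knowing anything about email or audit logs.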

Why This Matters More in the Age of AI Agents

Here's where this stops being an academic distinction.

AI coding agents — Copilot, Cursor, Claude Code, the rest — use your test suite as their primary feedback loop. The agent generates code, runs the tests, and if they pass, it ships. This workflow is powerful when the tests constrain behavior. It's dangerous when they don't.

The 2025 DORA Report found that AI adoption correlates with higher delivery instability in many organizations. Teams adopting AI coding tools without corresponding improvements in engineering discipline are seeing more incidents, not fewer. One likely mechanism: weak test suites give agents the confidence to ship code that hasn't actually been verified.

Think about what happens when an AI agent works against a test suite built with test-after practices:

- Agent generates a function implementation

- Tests pass — because the tests verify implementation patterns, not behavioral contracts

- Agent introduces a subtle behavioral change in a refactor

- Tests still pass — they were never testing the behavior that changed

- Bug ships to production with full CI confidence

Now think about what happens with TDD-written tests:

- Agent generates an implementation

- Tests constrain the behavior — they were written as specifications before any implementation existed

- Agent attempts a refactor that changes behavior

- Test fails — the specification hasn't changed, but the behavior has

- Agent corrects or escalates

The difference is whether your tests specify what the code should do or what the code currently does. TDD produces the former. Test-after produces the latter.
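Here is a small illustration, assuming a hypothetical shipping rule: orders of 5000 cents or more ship free. The current implementation has an off-by-one at the boundary. A test-after suite whose expected values were captured by running the code records the bug as correct. A suite derived from the requirement rejects it.

```typescript
import assert from "node:assert/strict";

// Hypothetical requirement: orders of 5000 cents or more ship free;
// anything below that pays 499 cents shipping.
type ShippingFn = (subtotalCents: number) => number;
type Case = [subtotalCents: number, expectedShippingCents: number];

// Current code, with an off-by-one at the boundary (> where >= was meant).
const currentShipping: ShippingFn = (subtotal) => (subtotal > 5000 ? 0 : 499);
const fixedShipping: ShippingFn = (subtotal) => (subtotal >= 5000 ? 0 : 499);

// Test-after: expected values captured by running the current code.
const recordedCases: Case[] = [
  [4000, 499],
  [5000, 499], // recorded from the implementation, bug included
  [6000, 0],
];

// Specification: expected values taken from the requirement.
const specifiedCases: Case[] = [
  [4999, 499],
  [5000, 0], // the boundary the requirement names
  [6000, 0],
];

const passes = (fn: ShippingFn, cases: Case[]): boolean =>
  cases.every(([subtotal, expected]) => fn(subtotal) === expected);

assert.equal(passes(currentShipping, recordedCases), true); // bug passes
assert.equal(passes(currentShipping, specifiedCases), false); // bug caught
assert.equal(passes(fixedShipping, recordedCases), false); // fix "fails"
```

Look at the last check. The recorded suite flags the correct fix as a regression, which is what "testing what the code currently does" means in practice.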

TDD + Clean Architecture: Where Design Compounds

TDD doesn't just improve individual units. When combined with Clean Architecture, it shapes the entire system's structure.

Clean Architecture defines concentric boundaries: domain at the center, application logic around it, infrastructure at the edge. Dependencies point inward. The core knows nothing about databases, frameworks, or external services.

TDD naturally enforces these boundaries. Here's why.

When you write a test for a use case, TDD forces you to define what the use case depends on — in the test. If your use case test requires a database connection, you feel immediate design pressure. The test is telling you: this use case shouldn't know about databases.

So you introduce an interface. The use case depends on a UserRepository port, not a PostgresUserRepository implementation. Dependency inversion emerges from the testing constraint, not from reading a design patterns book.

```typescript
// TDD forces this design:
test('should reject duplicate email during registration', () => {
  const userRepo: UserRepository = new InMemoryUserRepository();
  userRepo.save(createUser({ email: 'alice@example.com' }));

  const useCase = new RegisterUser(userRepo);
  const result = useCase.execute({ email: 'alice@example.com', name: 'Bob' });

  expect(result.isFailure).toBe(true);
  expect(result.error).toBe('EMAIL_ALREADY_EXISTS');
});
```

Notice what happened without any explicit design effort:

- Dependency Inversion: RegisterUser depends on a UserRepository interface, not a concrete database. The test used an in-memory implementation.

- Interface Segregation: UserRepository only needs the methods this use case requires. The test makes that visible.

- Single Responsibility: The test describes one behavior: rejecting duplicate emails. The implementation follows.

- Testability: The use case is trivially testable because TDD forced clean boundaries from the start.

This isn't coincidence. TDD and Clean Architecture share the same underlying principle: separate what the system does from how it does it. Tests written first naturally express the what. Implementation fills in the how.
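The registration test above drives out production code along these lines. This is one possible sketch, not the only design. `User`'s fields, the `Result` shape, and the `findByEmail` method on the port are assumptions chosen to satisfy the test as written. A Postgres-backed adapter would implement the same `UserRepository` interface, and `RegisterUser` would not change.

```typescript
import assert from "node:assert/strict";

// Assumed shapes; the test only fixes the names it calls.
interface User {
  email: string;
  name: string;
}

interface Result {
  isFailure: boolean;
  error?: "EMAIL_ALREADY_EXISTS";
}

// Port: only the operations RegisterUser needs.
interface UserRepository {
  findByEmail(email: string): User | undefined;
  save(user: User): void;
}

// In-memory adapter for tests; a database adapter would implement the same port.
class InMemoryUserRepository implements UserRepository {
  private readonly users = new Map<string, User>();

  findByEmail(email: string): User | undefined {
    return this.users.get(email);
  }

  save(user: User): void {
    this.users.set(user.email, user);
  }
}

// Test helper with a default name, since the test only cares about email.
function createUser(fields: { email: string; name?: string }): User {
  return { email: fields.email, name: fields.name ?? "Test User" };
}

// Use case: depends on the port, never on a concrete database.
class RegisterUser {
  private readonly users: UserRepository;

  constructor(users: UserRepository) {
    this.users = users;
  }

  execute(input: { email: string; name: string }): Result {
    if (this.users.findByEmail(input.email) !== undefined) {
      return { isFailure: true, error: "EMAIL_ALREADY_EXISTS" };
    }
    this.users.save({ email: input.email, name: input.name });
    return { isFailure: false };
  }
}

// The registration test, expressed with node:assert instead of expect().
const userRepo: UserRepository = new InMemoryUserRepository();
userRepo.save(createUser({ email: "alice@example.com" }));
const result = new RegisterUser(userRepo).execute({ email: "alice@example.com", name: "Bob" });
assert.equal(result.isFailure, true);
assert.equal(result.error, "EMAIL_ALREADY_EXISTS");
```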

The Mutation Testing Connection

If TDD is a design tool, mutation testing is the measurement that proves it.

Mutation testing works by introducing small changes to production code — flipping a condition, changing a return value, deleting a statement — and checking whether tests catch the change. If tests still pass after a mutation, those tests aren't actually verifying the behavior that was mutated.
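Here is a toy illustration of the mechanics. It is hand-rolled rather than built on a real mutation testing tool (StrykerJS is one option for TypeScript). The function, mutants, and suites are all hypothetical. Each mutant is one small edit to the original. A mutant is killed when the suite fails against it, and the mutation score is the fraction of mutants killed.

```typescript
import assert from "node:assert/strict";

type AgeCheck = (age: number) => boolean;

// Hypothetical production code: customers must be at least 18.
const original: AgeCheck = (age) => age >= 18;

// Hand-written mutants: the kind of one-token edits a mutation tool generates.
const mutants: AgeCheck[] = [
  (age) => age > 18, // boundary: >= becomes >
  (age) => age < 18, // negation: >= becomes <
  () => true, // constant: always returns true
];

// A suite returns true when all of its tests pass.
type Suite = (fn: AgeCheck) => boolean;

// Score = fraction of mutants the suite kills (fails against).
function mutationScore(suite: Suite): number {
  const killed = mutants.filter((mutant) => !suite(mutant));
  return killed.length / mutants.length;
}

// Suite A: one clearly adult age, one clearly minor age.
const suiteA: Suite = (fn) => fn(30) === true && fn(10) === false;
// Suite B: also pins down the boundary that the rule is about.
const suiteB: Suite = (fn) => suiteA(fn) && fn(18) === true && fn(17) === false;

// Both suites pass against the real code, so both look fine in CI.
assert.equal(suiteA(original), true);
assert.equal(suiteB(original), true);
```

Suite A scores 2/3. The `>=` to `>` mutant survives because no test sits on the boundary. Suite B adds the boundary cases and kills all three mutants.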

TDD-written tests consistently produce higher mutation scores. The reason is structural: when you write the test before the code, you're testing the behavior you specified, not the implementation you wrote. The test says "given this input, the output should be X." Any mutation that changes the output for that input gets caught.

Test-after tests, by contrast, tend to mirror the implementation. They assert on intermediate values, verify method calls, or check structural properties of the output. Mutations to the behavioral logic often survive because the test wasn't examining that logic — it was examining the scaffolding around it.

We see this pattern repeatedly across codebases:

- TDD-written modules: Mutation scores of 70-90% on critical paths

- Test-after modules: Mutation scores of 10-30%, despite similar coverage numbers

Same coverage. Dramatically different effectiveness. The difference isn't test quantity — it's whether the tests were written as specifications (TDD) or as verifications (test-after).
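The sketch below shows how identical coverage can hide very different effectiveness. It uses a hypothetical `checkout` function and payment port. Both tests execute every line of `checkout`, so their line coverage is the same. Only the behavioral test notices when a mutation flips the tax addition to subtraction.

```typescript
import assert from "node:assert/strict";

// Hypothetical payment port and checkout function, for illustration only.
interface PaymentGateway {
  charge(amountCents: number): void;
}
type Checkout = (subtotalCents: number, taxRate: number, gateway: PaymentGateway) => void;

const checkout: Checkout = (subtotalCents, taxRate, gateway) => {
  gateway.charge(Math.round(subtotalCents * (1 + taxRate)));
};

// Mutant: "+" flipped to "-". Every line still executes.
const checkoutMutant: Checkout = (subtotalCents, taxRate, gateway) => {
  gateway.charge(Math.round(subtotalCents * (1 - taxRate)));
};

// A fake gateway that records every charge it receives.
function recordingGateway(): PaymentGateway & { charges: number[] } {
  const charges: number[] = [];
  return {
    charges,
    charge: (amountCents) => {
      charges.push(amountCents);
    },
  };
}

// Interaction-style test: verifies the gateway was called, not with what.
function interactionTest(fn: Checkout): boolean {
  const gateway = recordingGateway();
  fn(10_000, 0.08, gateway);
  return gateway.charges.length === 1;
}

// Behavioral test: 10,000 cents plus 8% tax must be charged as 10,800 cents.
function behavioralTest(fn: Checkout): boolean {
  const gateway = recordingGateway();
  fn(10_000, 0.08, gateway);
  return gateway.charges.length === 1 && gateway.charges[0] === 10_800;
}

// Same coverage; only the behavioral test kills the mutant.
assert.equal(interactionTest(checkoutMutant), true);
assert.equal(behavioralTest(checkoutMutant), false);
```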

What Changes When You Adopt TDD-as-Design

Teams that shift from "TDD as testing" to "TDD as design" report consistent changes across their codebases.

Functions get smaller. When you write one test per behavior before implementation, each function tends to do one thing. There's no temptation to add "while I'm here" logic because there's no test for it yet.

APIs get clearer. The test is the first consumer of your API. If the test reads awkwardly, the API is awkward. You fix it before any other code depends on it.
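Here is a small, hypothetical example of a test that reads awkwardly. The first draft of `createSubscription` takes two positional booleans, and the first test that calls it makes the problem obvious. Switching to named options fixes the call site before anything else depends on the old signature.

```typescript
import assert from "node:assert/strict";

// First draft of a hypothetical API. The first test to call it reads:
//   createSubscriptionV1("alice@example.com", 12, true, false)
// Which boolean is auto-renew and which is trial? The call site can't say.
function createSubscriptionV1(email: string, months: number, autoRenew: boolean, trial: boolean) {
  return { email, months, autoRenew, trial };
}

// Revised after listening to the test: named options, explicit defaults.
interface SubscriptionOptions {
  email: string;
  months: number;
  autoRenew?: boolean;
  trial?: boolean;
}

function createSubscription({ email, months, autoRenew = false, trial = false }: SubscriptionOptions) {
  return { email, months, autoRenew, trial };
}

// The same intent, now readable at the call site.
const sub = createSubscription({ email: "alice@example.com", months: 12, autoRenew: true });
assert.deepEqual(sub, createSubscriptionV1("alice@example.com", 12, true, false));
```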

Coupling decreases. Design pressure catches dependency problems immediately. Instead of discovering tight coupling six months later during a refactor, you feel it in the Red phase and address it before writing a line of production code.

Refactoring gets safer. TDD-written tests verify behavior, not implementation. You can restructure internals freely — the tests care about inputs and outputs, not about how the code achieves them.

Debugging time drops. When a TDD test fails, it tells you exactly what behavior broke. The test is a specification: "given X, expected Y, got Z." There's no mystery about what went wrong.

AI agent output improves. This is the compounding effect. When AI agents work against a TDD-written test suite, the specifications constrain their output. The agent can't ship behavior that violates the specification, even if its implementation looks plausible. The test suite becomes a behavioral contract, not a rubber stamp.

The Uncomfortable Truth

TDD-as-design is harder than test-after. It requires thinking about the problem before solving it. It demands that you define what success looks like before you know how to achieve it. It feels slower at first — because you're doing the design work that test-after development skips.

But that design work doesn't disappear when you skip it. It shows up later as bugs, as refactoring debt, as test suites that pass with broken code, as AI agents shipping defects with confidence.

DORA's research makes the stakes concrete. Its elite performers deploy on demand with short lead times and low change failure rates. In our experience, teams like that don't have more tests. They have better tests. Tests that specify behavior. Tests that catch mutations. Tests that constrain AI agents. Tests written as design tools, not verification steps.

The question isn't whether you can afford to practice TDD. The question is whether you can afford not to — especially when AI agents are amplifying whatever patterns your codebase already has.

Start Here

If you're practicing test-after and want to shift toward TDD-as-design, start small:

- Pick one new feature. Don't try to retrofit existing code. Start with something greenfield.

- Write the test first. Really first. Before any production file exists. Let the test define the API.

- Listen to the design pressure. If setup is painful, simplify the design. Don't push through it.

- Keep Green minimal. Write the simplest code that passes. Resist the urge to anticipate.

- Refactor with confidence. The test is your safety net. Use it.

- Run mutation testing. Compare the mutation score of your TDD-written code against your test-after code. The difference will make the case better than any article can.

The tests are a side effect. The design is the product.