AI coding agents don't create quality — they amplify whatever patterns already exist in your codebase. On clean architecture with comprehensive tests, agents produce reliable code. On messy codebases, agents produce faster mess. The 2025 DORA Report confirms: AI adoption correlates with higher throughput and higher instability.

This isn't a tooling problem. It's a foundation problem.

The Data Is In: Speed Without Discipline Creates Instability

The 2025 DORA Report found that for the first time, AI adoption is linked to higher throughput — teams using AI move work through the system faster. But it also found that AI-heavy teams experience higher instability: more change failures, increased rework, and longer cycle times to resolve issues.

CodeRabbit's analysis of GitHub pull requests tells the same story: AI-authored code creates 1.7x more issues than human-written code. Broken down by category, that is 1.75x more logic and correctness errors, 1.64x more maintainability issues, and 1.57x more security findings.

Meanwhile, 84% of developers now use AI tools, and those tools reportedly write 41% of all code. Of those developers, 66% report spending more time fixing "almost-right" AI-generated code than they saved generating it.

The pattern is consistent: AI makes you faster at producing code, but not better at producing working software.

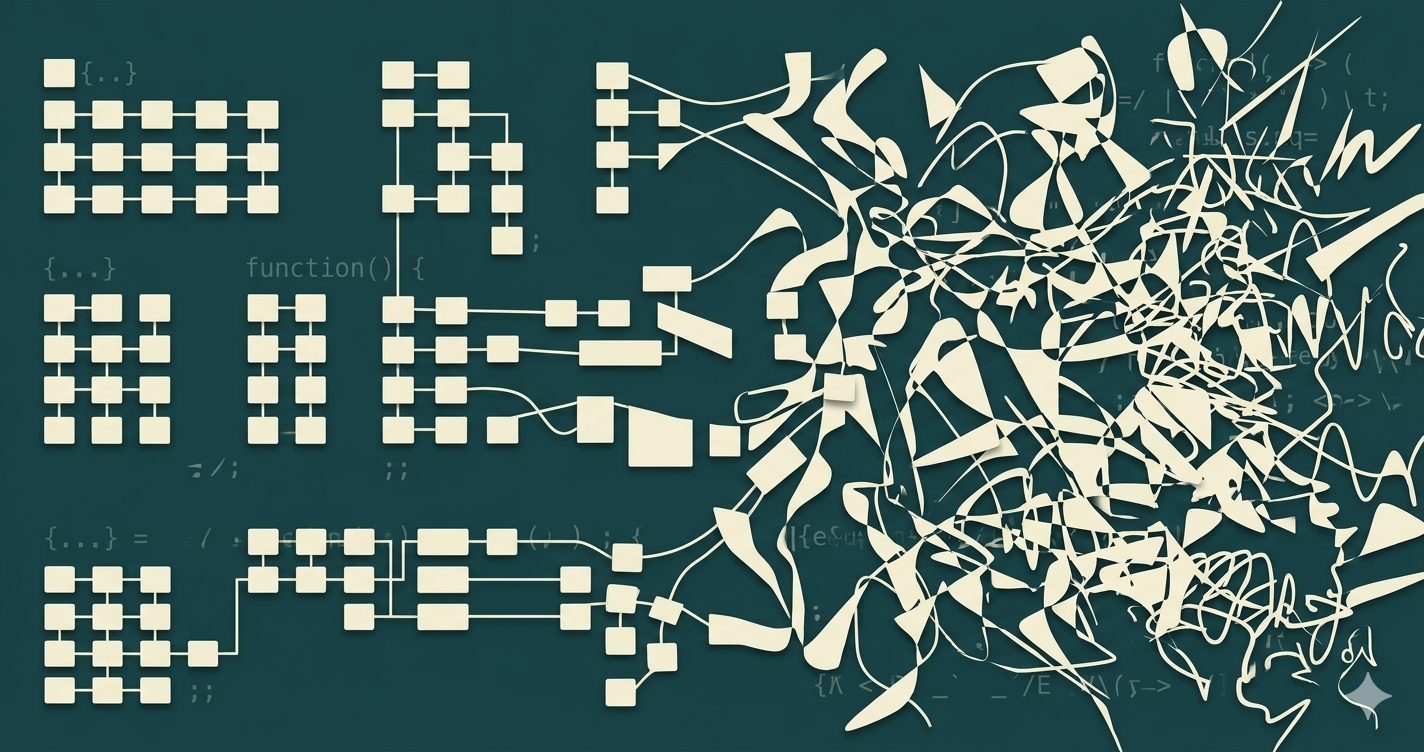

Why AI Amplifies Existing Patterns

To understand why, it helps to look at how AI coding agents actually work — and what they can't do.

An AI agent generates code based on patterns in its training data and the context you give it. It doesn't understand your business domain. It can't infer unstated architectural rules. It doesn't know that your team decided six months ago to keep authentication logic out of the API layer.

What it can do is pattern-match. And the patterns it matches come from your codebase.

Clean patterns in, clean code out

When your codebase has clear module boundaries, consistent naming, well-defined interfaces, and comprehensive tests:

- The agent has strong signals about where code should go

- Tests provide an immediate feedback loop — the agent knows when it broke something

- Architecture boundaries constrain the solution space to correct answers

- Naming conventions make the agent's suggestions predictable and consistent

The agent becomes a reliable pair programmer, operating within guardrails that the codebase itself provides.

Messy patterns in, faster mess out

When your codebase has circular dependencies, god classes, no test coverage, and implicit conventions:

- The agent has no signal about where code belongs

- No tests means no feedback — the agent has no way to know if it broke something

- No architecture boundaries means the solution space is unbounded

- Inconsistent naming means the agent reproduces the inconsistency

The agent becomes a chaos accelerator. Every problem that existed before now arrives faster, and in more places at once.

The Three Amplification Effects

We see three specific ways this pattern amplification manifests in real engineering organizations.

1. Defect amplification — your tests are lying, and AI believes them

Most codebases have what we call "decorative tests": tests that pass whether the code is correct or not. Mutation testing makes them visible by introducing small deliberate faults into the production code and checking whether any test fails. Run it on a typical enterprise codebase and you may find that 80-90% of tests would still pass even if you deleted the production code they claim to test.

When a human developer writes code, the slow pace provides a natural check. When an AI agent writes code, it generates at speed, runs the test suite, sees green, and moves on. If your test suite is decorative, "green" means nothing. The agent ships bugs with the confidence of a passing pipeline.
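To make the difference concrete, here is a minimal sketch of a decorative test next to an effective one. Everything in it is hypothetical: `apply_discount`, its hand-written mutant, and both tests are invented for illustration, and a real mutation testing tool would generate the mutants for you.

```python
def apply_discount(price: float, percent: float) -> float:
    """Production code (hypothetical): reduce a price by a percentage."""
    return price * (1 - percent / 100)


def mutant_apply_discount(price: float, percent: float) -> float:
    """Hand-written mutant: the subtraction has been flipped to an addition."""
    return price * (1 + percent / 100)


def decorative_test(fn) -> bool:
    """Only checks that something float-like comes back, not that it is right."""
    result = fn(100.0, 20.0)
    return isinstance(result, float)


def effective_test(fn) -> bool:
    """Checks the observable outcome: 20% off 100 should be 80."""
    return abs(fn(100.0, 20.0) - 80.0) < 1e-9


if __name__ == "__main__":
    for test in (decorative_test, effective_test):
        passes_on_real_code = test(apply_discount)
        mutant_survives = test(mutant_apply_discount)
        print(test.__name__, "passes:", passes_on_real_code,
              "| mutant survives:", mutant_survives)
```

Both tests are green against the real code. Only the effective test goes red against the mutant, and that red is the signal an agent needs before it moves on.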

2. Architectural drift — AI doesn't know your boundaries

Architecture in most organizations exists in people's heads. The senior engineer knows that module X should never depend on module Y. The tech lead knows that database access must go through the repository layer. These rules are rarely codified as automated checks.

AI agents can't read minds. When the agent needs to add a database call, it looks at existing patterns. If three other files bypass the repository layer, the agent learns that direct database access is the convention. It reproduces the anti-pattern consistently and at scale.

3. Velocity illusion — faster production, slower delivery

Here's the paradox: the team is producing more code, but shipping isn't actually faster.

The reason is that code production is not the bottleneck. The bottleneck is the feedback loop — the time from "code written" to "confidently deployed." When AI agents accelerate code production without improving the feedback loop, you get a traffic jam.

GitClear's research found that refactoring activity dropped from 25% of changed lines in 2021 to less than 10% in 2024, while code cloning rose sharply. AI is generating more code, but lower-quality code — duplicated rather than abstracted, added rather than refactored.

The Foundation That Makes AI Work

If AI amplifies existing patterns, the solution isn't to stop using AI — it's to fix the patterns before you amplify them.

Three foundations determine whether AI agents help or hurt:

Foundation 1: Architecture boundaries that constrain AI behavior

When architecture boundaries are explicit and enforced — not documented in a wiki nobody reads, but checked on every commit — AI agents have clear guardrails.

- Clean module boundaries with explicit dependency rules

- No circular dependencies (they confuse agents as much as they confuse humans)

- Interface-based design so agents know the expected contracts

- Architecture fitness functions that break the build when violated
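As a rough illustration of the last point, a fitness function can be as small as a script that inspects imports. The sketch below assumes a hypothetical rule (code in an `api` layer must not import a `db` package directly) and uses Python's standard `ast` module; the layer names and the `FORBIDDEN` table are invented for the example, not taken from any particular tool.

```python
import ast

# Hypothetical rule set: layer name -> top-level packages it must not import.
FORBIDDEN = {"api": {"db"}}


def imported_packages(source: str) -> set:
    """Return the top-level package names imported by a piece of source code."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                found.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            found.add(node.module.split(".")[0])
    return found


def violations(layer: str, source: str) -> set:
    """Return the forbidden packages that this source imports for its layer."""
    return imported_packages(source) & FORBIDDEN.get(layer, set())


if __name__ == "__main__":
    handler = "import db.session\nfrom repository import users\n"
    bad = violations("api", handler)
    if bad:
        # In CI, a non-zero exit here is what breaks the build.
        raise SystemExit(f"api layer imports forbidden packages: {sorted(bad)}")
```

Run on every commit, a check like this turns a rule that lived in a senior engineer's head into a failure the agent sees immediately.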

Foundation 2: Tests that actually verify behavior

The distinction that matters is not coverage, but effectiveness. A test that would still pass if you deleted the production code it tests is a decorative test.

- Mutation testing to measure test effectiveness (not just coverage)

- Tests written from specifications, not from implementation

- A test pyramid that catches defects at the cheapest level

- Contract tests between services so agents can't silently break boundaries

Foundation 3: Explicit specifications that define "done"

AI agents are extraordinarily good at generating code that satisfies tests. The question is whether those tests capture what you actually want.

- Specifications written in problem-domain language before code

- Acceptance criteria that define observable outcomes

- Example mapping that surfaces edge cases and assumptions

- Quality gates that prevent shipping code that doesn't satisfy specs
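One way to picture the points above is a specification expressed as a table of examples that is written before the code. The sketch below is hypothetical throughout: the free-shipping rule, the threshold, and `shipping_cost` are made up to show the shape, with the boundary case being the kind of edge that example mapping tends to surface.

```python
from decimal import Decimal

# Hypothetical acceptance criterion, stated in problem-domain language:
# "Orders of 50.00 or more ship free; all other orders pay 4.99 shipping."
FREE_SHIPPING_THRESHOLD = Decimal("50.00")
STANDARD_SHIPPING = Decimal("4.99")

# Examples agreed on before implementation: (description, order total, expected cost).
EXAMPLES = [
    ("just below the threshold", Decimal("49.99"), Decimal("4.99")),
    ("exactly at the threshold", Decimal("50.00"), Decimal("0.00")),
    ("well above the threshold", Decimal("75.00"), Decimal("0.00")),
]


def shipping_cost(order_total: Decimal) -> Decimal:
    """Implementation under test; an agent could write or rewrite this part."""
    if order_total >= FREE_SHIPPING_THRESHOLD:
        return Decimal("0.00")
    return STANDARD_SHIPPING


def failing_examples() -> list:
    """Return the descriptions of every example the implementation gets wrong."""
    return [name for name, total, expected in EXAMPLES
            if shipping_cost(total) != expected]


if __name__ == "__main__":
    failed = failing_examples()
    print("all examples pass" if not failed else f"failing: {failed}")
```

Because the examples come from the specification rather than from the implementation, an agent that changes `>=` to `>` fails the "exactly at the threshold" case instead of quietly redefining what "done" means.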

The Sequence Matters

You cannot skip the foundations and jump straight to AI acceleration. Each missing foundation compounds the damage from the one before it.

Without architecture boundaries → agents scatter code everywhere. Without effective tests → the scattered code ships to production. Without specifications → nobody can tell it's wrong until users report bugs.

The organizations that get real value from AI agents are the ones that invest in these foundations first.

How to Know If Your Codebase Is Ready

Before deploying AI agents at scale, ask three questions:

1. What's your mutation score? Run mutation testing on your critical paths. If more than 50% of mutants survive, your test suite is not ready for AI-speed code production.

2. How many circular dependencies do you have? Each circular dependency is a place where AI agents will create unpredictable side effects.

3. Can you deploy on demand? If deploying requires coordination or manual steps, you can't absorb the code velocity that AI agents produce.

The Bottom Line

AI coding agents are the most powerful amplifier engineering teams have ever had. That's what makes them dangerous on weak foundations and extraordinary on strong ones.

The teams that will win aren't the ones that adopt AI fastest. They're the ones that build the foundations that make AI reliable — clean architecture, effective tests, explicit specifications, and fast feedback loops.

The question isn't "Should we use AI agents?" It's "Is our codebase ready for what AI will amplify?"