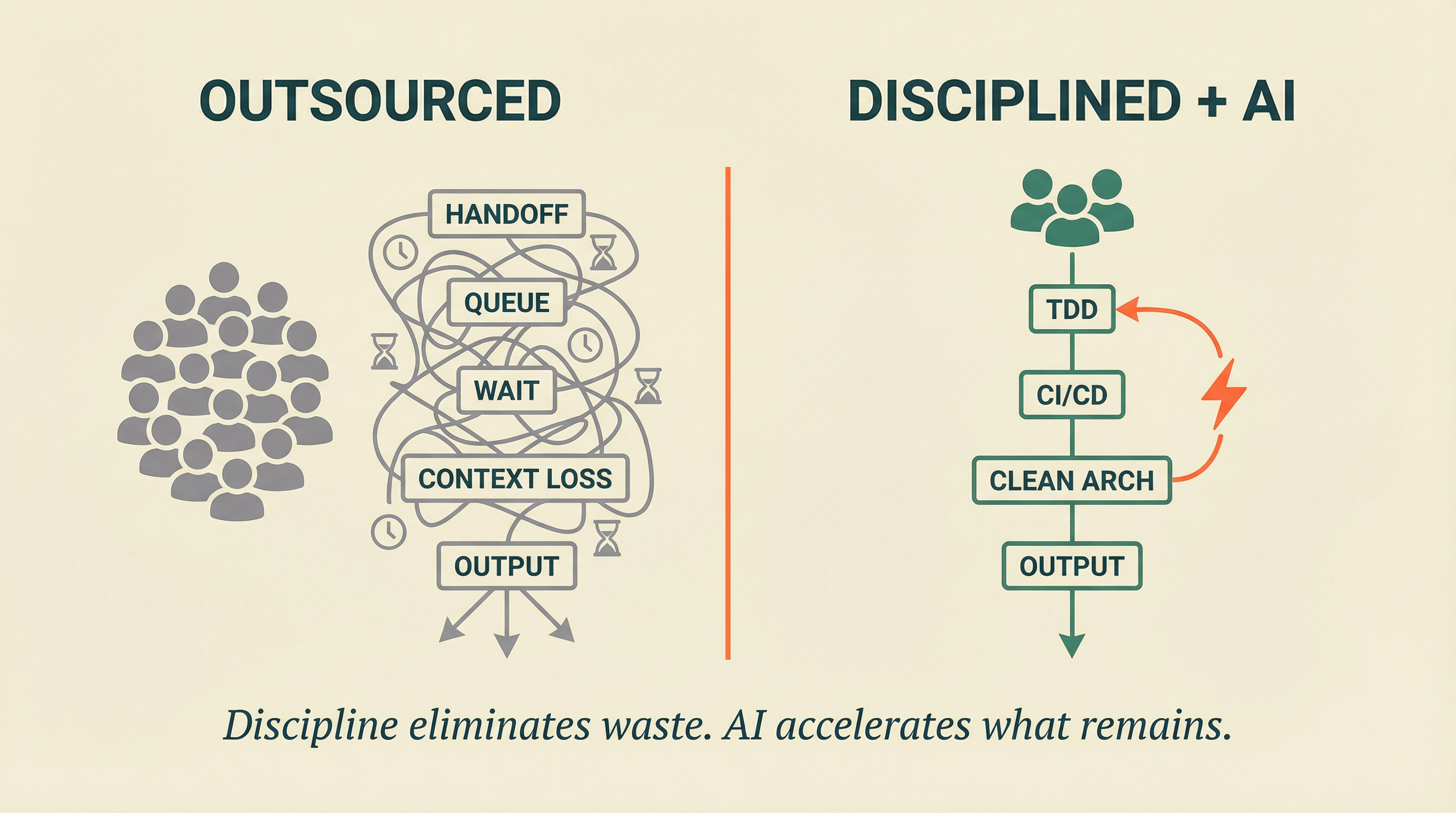

Three disciplined engineers with AI agents consistently outperform 15-person outsourced teams on every delivery metric that matters: lead time, deployment frequency, change failure rate, and mean time to restore. The shift is not about AI replacing developers — it is about eliminating the handoff delays, context loss, and rework cycles that consume the majority of outsourced delivery time.

Here is a number that should make every founder pause: 60-80% of elapsed time in outsourced delivery is not development. It is waiting. Waiting for handoffs between teams. Waiting for QA queues. Waiting for timezone gaps to close. Waiting for change requests to travel through contract boundaries.

You are not paying for 15 developers. You are paying for 3-4 developers and a very expensive waiting system.

AI has not just changed the economics of software development. It has exposed what was always true about outsourcing: most of the cost was never the code.

The Real Cost of 15 Outsourced Developers

The monthly invoice says $95-190K. The actual cost is significantly higher.

Every outsourced feature follows the same pattern. A product manager writes a specification. That specification crosses a contract boundary to a vendor team lead. The team lead assigns it to a developer — often one juggling work across multiple clients. The developer writes code. That code enters a QA queue with a 5+ business day service level. Defects get logged. The developer — who has moved on to another task, possibly for another client — reconstructs context and fixes the issue. The fix re-enters the QA queue. Eventually, a deployment ticket gets filed with a separate operations team.

From "code complete" to "feature in production": 3-6 weeks. From "feature requested" to "customer using it": 6-12 weeks.
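
To see where those weeks go, it helps to split each stage into time someone was actively working and time the feature sat in a queue. The sketch below does that in Python. The `Stage` type, the `waiting_share` function, and every number in `feature` are illustrative assumptions, not measurements from a real project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    work_days: float  # time someone is actively working on the feature
    wait_days: float  # time the feature sits in a queue or handoff


def waiting_share(stages: list[Stage]) -> float:
    """Fraction of elapsed time spent waiting rather than working."""
    work = sum(s.work_days for s in stages)
    wait = sum(s.wait_days for s in stages)
    elapsed = work + wait
    if elapsed == 0:
        raise ValueError("no elapsed time recorded")
    return wait / elapsed


# Hypothetical figures for one feature; replace with timestamps from your tracker.
feature = [
    Stage("specification handoff", work_days=2, wait_days=3),
    Stage("development", work_days=5, wait_days=2),
    Stage("QA queue and retest", work_days=2, wait_days=10),
    Stage("deployment ticket", work_days=1, wait_days=5),
]

print(f"waiting share: {waiting_share(feature):.0%}")
```

If the share you compute from your own ticket history lands well above half, the constraint is queue time, and adding developers will not move it.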

Now measure what that actually costs per feature delivered. Not per hour billed. Per feature in production, generating value.

The math is brutal.

What the invoice shows:

- 15 developers at $5-10K/month each = $75-150K/month

- QA team, project management, infrastructure overhead = $20-40K/month

- Total: $95-190K/month
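
One way to make the per-feature view concrete is to divide the monthly spend by the number of features that actually reached production that month. This is a rough sketch using the invoice totals above. `FEATURES_SHIPPED_PER_MONTH` is a hypothetical figure that you would replace with your own count.

```python
def cost_per_feature(monthly_cost_usd: float, features_shipped: int) -> float:
    """Monthly spend divided by features that reached production that month."""
    if features_shipped <= 0:
        raise ValueError("at least one shipped feature is needed to compute a unit cost")
    return monthly_cost_usd / features_shipped


# Invoice totals from the list above (USD per month).
INVOICE_LOW, INVOICE_HIGH = 95_000, 190_000

# Hypothetical: replace with the number of features your customers actually received.
FEATURES_SHIPPED_PER_MONTH = 4

low = cost_per_feature(INVOICE_LOW, FEATURES_SHIPPED_PER_MONTH)
high = cost_per_feature(INVOICE_HIGH, FEATURES_SHIPPED_PER_MONTH)
print(f"${low:,.0f} to ${high:,.0f} per feature in production")
```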

What the invoice hides:

- Handoff delays adding 2-4 weeks per feature (queue time, not work time)

- Context switching costs — developers spread across clients lose 20-40% capacity

- Specification misunderstandings causing 30-50% rework rates

- Knowledge that leaves when developers rotate off your project

- Technical debt accumulating without visibility or accountability

- Incident response measured in days, not minutes

A defect found in the hour after code was written gets fixed in minutes with full context. The same defect found by a separate QA team a week later requires reconstructing context, writing a reproduction case, and waiting for the developer to return to code they no longer remember clearly. That is not a quality process. That is a rework factory.

Why 3 Engineers Ship More Than 15

This is not a story about AI writing code faster. It is a story about eliminating everything that is not writing code.

Three engineers working on a single product with AI agents operate in a fundamentally different model:

No handoffs. The same engineer who understands the requirement writes the code, writes the tests, reviews the architecture, and deploys to production. AI agents handle the mechanical parts — generating test scaffolding, enforcing architecture boundaries, running mutation analysis — while the engineer retains full context.

No queue delays. With CI/CD pipelines enforcing quality gates on every commit, there is no separate QA phase. Tests run in minutes, not days. Deployment happens on merge, not on a ticket.

No context switching. Three engineers focused on one product maintain deep context. They understand the domain, the architecture, the customer. An outsourced developer juggling three clients cannot compete with that depth, regardless of skill level.

No specification telephone. Engineers talk directly to stakeholders. AI agents help translate specifications into executable tests. Misunderstandings surface in hours, not weeks.

Here is what the comparison actually looks like:

The cost difference is striking. But the speed difference is what changes the business. A team that deploys daily can run experiments, respond to customer feedback, and course-correct in real time. A team that deploys quarterly is guessing — and paying a premium for the privilege.

The Discipline Prerequisite

Here is the part that most "AI will fix everything" narratives leave out: this only works with engineering discipline.

AI agents are pattern amplifiers. Point them at a well-architected codebase with clean boundaries, comprehensive tests, and automated deployment pipelines, and they multiply output. Point them at a tangled codebase with no tests, circular dependencies, and manual deployments, and they multiply chaos.

Three engineers without discipline do not outperform 15 outsourced developers. They just produce more bugs, faster.

The discipline stack that makes the math work:

Test-Driven Development. Every feature starts with a failing test. AI agents generate code that passes the test. The test is the specification — not a document that gets stale, not a ticket that gets misinterpreted. If the test passes, the feature works. If it does not, you know immediately.
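
As a minimal illustration of the test acting as the specification, the Python sketch below shows three tests and the small implementation written to satisfy them. The `order_total` function and its discount rule are hypothetical examples, not taken from any particular product. In practice the tests exist first and fail until the implementation is added.

```python
def order_total(prices: list[float], discount_rate: float = 0.0) -> float:
    """Minimal implementation, written only after the tests below existed and failed."""
    if not 0.0 <= discount_rate <= 1.0:
        raise ValueError("discount_rate must be between 0 and 1")
    return round(sum(prices) * (1.0 - discount_rate), 2)


def test_sums_line_prices() -> None:
    assert order_total([10.0, 5.5]) == 15.5


def test_applies_discount_rate() -> None:
    assert order_total([100.0], discount_rate=0.2) == 80.0


def test_rejects_invalid_discount() -> None:
    try:
        order_total([100.0], discount_rate=1.5)
    except ValueError:
        return
    raise AssertionError("expected ValueError for a discount above 100%")


# A test runner such as pytest would collect these; here they are called directly.
for test in (test_sums_line_prices, test_applies_discount_rate, test_rejects_invalid_discount):
    test()
```

An agent asked to add a new pricing rule gets a new failing test as its brief, and the existing tests tell it immediately if it broke something else.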

Clean Architecture. Domain logic separated from infrastructure. Clear boundaries between components. AI agents know where new code belongs because the architecture tells them. Without this, AI-generated code creates the same tangled dependencies that make outsourced codebases unmaintainable.
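
One common way to express that separation is a port that the domain defines and an adapter that infrastructure implements. The sketch below is a minimal Python example under that assumption. `Invoice`, `InvoiceRepository`, `bill_customer`, and `InMemoryInvoiceRepository` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Invoice:
    customer_id: str
    amount_cents: int


class InvoiceRepository(Protocol):
    """Port: the domain states what it needs, not how it is stored."""

    def save(self, invoice: Invoice) -> None: ...


def bill_customer(customer_id: str, amount_cents: int, repo: InvoiceRepository) -> Invoice:
    """Domain logic: depends only on the port, never on a database driver."""
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    invoice = Invoice(customer_id, amount_cents)
    repo.save(invoice)
    return invoice


class InMemoryInvoiceRepository:
    """Adapter used in tests; a database adapter would expose the same method."""

    def __init__(self) -> None:
        self.saved: list[Invoice] = []

    def save(self, invoice: Invoice) -> None:
        self.saved.append(invoice)


repo = InMemoryInvoiceRepository()
bill_customer("cust-1", 4_200, repo)
```

With this shape, new business rules go in the domain function and new storage concerns go in an adapter, which is the kind of signal an agent can follow.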

Continuous Delivery. Every commit goes through automated quality gates — linting, unit tests, integration tests, contract tests, mutation analysis. If it passes, it deploys. No manual QA queues. No deployment tickets. No release trains.
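
Real pipelines are configured in a CI system, but the control flow is simple enough to sketch in a few lines of Python: run each gate in order, stop at the first failure, and deploy only if everything passed. The `run_gates` function and the stubbed checks are assumptions for illustration. Each lambda stands in for a real tool invocation.

```python
from typing import Callable

Gate = tuple[str, Callable[[], bool]]


def run_gates(gates: list[Gate]) -> tuple[bool, list[str]]:
    """Run gates in order and stop at the first failure.

    Returns (deployable, names of the gates that passed).
    """
    passed: list[str] = []
    for name, check in gates:
        if not check():
            return False, passed
        passed.append(name)
    return True, passed


# Stub checks standing in for real tool invocations (hypothetical outcomes).
gates: list[Gate] = [
    ("lint", lambda: True),
    ("unit tests", lambda: True),
    ("integration tests", lambda: True),
    ("contract tests", lambda: False),  # simulate a broken consumer contract
    ("mutation analysis", lambda: True),
]

deployable, passed = run_gates(gates)
print("deploy" if deployable else f"blocked after: {passed}")
```

Ordering the cheap gates first keeps feedback fast: a lint failure surfaces in seconds and the slower gates never run.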

Measurable quality. Not code coverage percentages (which lie — 88% of tests with passing coverage still miss critical defects). Mutation testing scores that prove your tests actually catch bugs. Architecture fitness functions that detect boundary violations. DORA metrics that show delivery health in real time.
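
The gap between coverage and mutation score is easiest to see on a toy example. In the Python sketch below, both suites execute every line of `is_adult`, so both would report full line coverage, yet only one of them notices when the logic is changed. The mutants are written by hand here; a mutation testing tool would generate them automatically. All names are hypothetical.

```python
from typing import Callable

Predicate = Callable[[int], bool]


def is_adult(age: int) -> bool:
    return age >= 18


# Hand-written mutants: small deliberate changes to the original logic.
MUTANTS: list[Predicate] = [
    lambda age: age > 18,  # boundary shifted
    lambda age: age < 18,  # comparison inverted
    lambda age: True,      # body replaced with a constant
]


def weak_suite(fn: Predicate) -> bool:
    """Executes every line of is_adult, but checks a single easy case."""
    return fn(30) is True


def strong_suite(fn: Predicate) -> bool:
    """Also checks both sides of the boundary."""
    return fn(30) is True and fn(18) is True and fn(17) is False


def mutation_score(suite: Callable[[Predicate], bool], mutants: list[Predicate]) -> float:
    """Share of mutants the suite detects (a mutant is killed when the suite fails on it)."""
    killed = sum(1 for mutant in mutants if not suite(mutant))
    return killed / len(mutants)


print(f"weak suite:   {mutation_score(weak_suite, MUTANTS):.0%} of mutants killed")
print(f"strong suite: {mutation_score(strong_suite, MUTANTS):.0%} of mutants killed")
```

Both suites pass against the real function. Only the mutation score separates them.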

This is not optional complexity. It is the foundation that makes AI multiplication work. Without it, you are just replacing one dysfunctional model with a smaller, faster dysfunctional model.

What Founders Actually Need to Measure

If you are currently outsourcing and evaluating whether this shift makes sense, stop looking at velocity reports and story points. Those are vanity metrics — team estimates in made-up units that tell you nothing about actual delivery.

Measure these instead:

Lead time. How long from "we decided to build this" to "a customer is using it"? If the answer is measured in months, your delivery system has a queue problem, not a capacity problem. Adding more outsourced developers will not fix it.

Deployment frequency. How often does code reach production? Daily means fast feedback and low risk. Monthly means batch releases, high risk, and long rollback times. Your outsourcing vendor's deployment frequency tells you more than their headcount.

Change failure rate. What percentage of deployments cause incidents? If you do not know this number, you do not have visibility into your engineering quality. Your vendor probably does not track it either.

Mean time to restore. When something breaks in production, how fast does it get fixed? If the answer involves filing a ticket with a vendor's operations team across a timezone gap, the answer is "too slow."

These four metrics, the DORA metrics, predict software delivery performance more reliably than team size, technology choice, or methodology. Elite performers deploy on demand, keep their change failure rate below 5%, and restore service in under an hour. Most outsourced teams do not measure these at all.
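
If your vendor does not report these numbers, they can be derived from a deployment log. The Python sketch below is one possible shape, assuming a hypothetical `Deployment` record. Lead time is measured here from the request, as described above; DORA's own definition starts the clock at the commit, so rename the field if you follow that convention. The sample data is invented.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Deployment:
    requested_at: datetime
    deployed_at: datetime
    caused_incident: bool = False
    restored_at: Optional[datetime] = None  # set when the incident was resolved


def dora_summary(deploys: list[Deployment], window_days: int) -> dict:
    """Compute the four metrics over a reporting window of window_days."""
    if not deploys or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    lead_times = [d.deployed_at - d.requested_at for d in deploys]
    failures = [d for d in deploys if d.caused_incident]
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_restore": sum(restores, timedelta()) / len(restores) if restores else None,
    }


# Invented sample: three deployments in a seven-day window.
log = [
    Deployment(datetime(2024, 1, 1, 9), datetime(2024, 1, 2, 9)),
    Deployment(datetime(2024, 1, 2, 9), datetime(2024, 1, 4, 9),
               caused_incident=True, restored_at=datetime(2024, 1, 4, 9, 30)),
    Deployment(datetime(2024, 1, 5, 9), datetime(2024, 1, 5, 15)),
]

summary = dora_summary(log, window_days=7)
for metric, value in summary.items():
    print(f"{metric}: {value}")
```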

The Transition Is Not a Rewrite

The biggest risk founders see in this shift is the transition itself. "If we change our engineering model during a critical growth phase, we will break everything."

That fear is valid. And the answer is not a rewrite.

Start with diagnosis. Audit the existing codebase — not with opinions, but with data. Where are the circular dependencies? What is the actual test coverage quality (not the coverage percentage, but whether those tests catch real bugs)? How coupled are the components? What is the deployment pipeline maturity?
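
Circular dependencies are one of the few questions on that list that can be answered mechanically. Assuming you can export a module dependency map (the `graph` dictionaries below are hypothetical), a depth-first search is enough to find a cycle. This is a sketch, not a replacement for a proper dependency analysis tool.

```python
from typing import Optional


def find_cycle(graph: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one dependency cycle as a list of module names, or None if there is none."""
    UNVISITED, IN_PROGRESS, DONE = 0, 1, 2
    state = {node: UNVISITED for node in graph}
    stack: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        state[node] = IN_PROGRESS
        stack.append(node)
        for dep in graph.get(node, []):
            dep_state = state.get(dep, UNVISITED)
            if dep_state == IN_PROGRESS:
                # dep is already on the current path, so the path loops back to it.
                return stack[stack.index(dep):] + [dep]
            if dep_state == UNVISITED:
                found = visit(dep)
                if found:
                    return found
        stack.pop()
        state[node] = DONE
        return None

    for node in graph:
        if state[node] == UNVISITED:
            found = visit(node)
            if found:
                return found
    return None


# Hypothetical module graphs: "billing depends on orders", and so on.
tangled = {
    "billing": ["orders"],
    "orders": ["customers"],
    "customers": ["billing"],
    "reporting": ["orders"],
}
layered = {"api": ["domain"], "domain": []}

print(find_cycle(tangled))
print(find_cycle(layered))
```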

Then stabilise incrementally. Add characterisation tests around the riskiest modules — tests that document current behaviour before you change anything. Extract bounded contexts one at a time. Automate deployment for the least risky services first.
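
A characterisation test does not assert what the code should do. It records what the code does today, so that any later change in behaviour is deliberate. A minimal Python sketch follows, with `legacy_shipping_fee` as a hypothetical stand-in for an untested legacy function.

```python
def legacy_shipping_fee(weight_kg: float, express: bool) -> float:
    """Stand-in for untested legacy code whose rules nobody fully remembers."""
    fee = 4.0 if weight_kg < 2 else 4.0 + 1.5 * int(weight_kg)
    if express:
        fee = fee * 2 + 1
    return fee


# Recorded by running the current code, not derived from a specification.
RECORDED_BEHAVIOUR = {
    (0.5, False): 4.0,
    (2.0, False): 7.0,
    (2.9, True): 15.0,
    (10.0, True): 39.0,
}


def test_behaviour_is_unchanged() -> None:
    for (weight_kg, express), expected in RECORDED_BEHAVIOUR.items():
        actual = legacy_shipping_fee(weight_kg, express)
        assert actual == expected, f"{weight_kg} kg, express={express}: {actual} != {expected}"


test_behaviour_is_unchanged()
```

Some of the recorded values may turn out to be bugs. That is fine: the test makes the decision to change them explicit instead of accidental.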

Only then accelerate. Once the codebase has the discipline foundation — clean boundaries, real tests, automated pipelines — AI agents can operate safely. The 3-engineer model becomes viable because the codebase supports it.

This is not a 12-month transformation programme. With focused effort, most teams see measurable improvement in lead time within 30-60 days. The transition is incremental, data-driven, and reversible at every step.

The Window Is Closing

Two years ago, the outsourcing model still made sense for many startups. Labour cost arbitrage was real, and AI coding tools were too immature to change the equation.

That window is closing fast.

Every quarter, AI agents get more capable at generating disciplined code — when the codebase supports it. Every quarter, the gap between a disciplined 3-person team and a 15-person outsourced team widens. The founders who make this shift now build compounding advantages: faster feedback loops, better code health, deeper product understanding.

The founders who wait are paying an increasing premium for a model that delivers decreasing value.

The math has changed. The question is whether your engineering model has changed with it.

The Bottom Line

Fifteen outsourced developers cost more, ship more slowly, and produce lower-quality software than three disciplined engineers with AI, but only when those engineers operate with TDD, clean architecture, and continuous delivery. The shift is not about replacing people with AI. It is about eliminating the handoffs, queues, and context loss that make outsourced delivery slow and expensive. Start with diagnosis, stabilise incrementally, then accelerate. The math has changed. The teams that move first win.